Mobile App Analytics: Metrics That Actually Matter

Mobile app analytics platforms generate hundreds of metrics, dashboards, and data points. The result for most developers isn't clarity — it's paralysis. When everything is measured, nothing is prioritized. Teams end up tracking vanity metrics that look impressive in reports but don't drive meaningful product or business decisions.

This guide cuts through the noise. It identifies the metrics that actually predict app success, explains how to measure them correctly, and shows you how to build an analytics framework that drives action — not just dashboards.

The Analytics Hierarchy: What to Measure and Why

Tier 1: North Star Metrics (Track Daily)

Every app needs a single North Star metric that best represents the value users get from the product. This metric should:

- Correlate directly with user satisfaction

- Predict long-term retention and revenue

- Be influenceable by product and marketing decisions

Examples by app type:

| App Type | North Star Metric | Why |
|---|---|---|
| Social media | Daily active users (DAU) | Measures core engagement loop |
| Meditation | Weekly meditation minutes | Measures habit formation |
| E-commerce | Monthly purchases per user | Measures buying behavior |
| SaaS/productivity | Weekly active actions | Measures functional engagement |
| Gaming | Daily sessions per user | Measures entertainment value |
| Fitness | Weekly workouts completed | Measures goal progress |
| News/content | Daily articles read | Measures content consumption |

Your North Star metric should be the first thing you check every morning. Everything else is context for understanding changes in this number.

Tier 2: Core Health Metrics (Track Weekly)

These metrics form the foundation of your app's health:

Retention Rates

Retention is the single most important category of metrics for any app. If users don't come back, nothing else matters.

Day 1 Retention: Percentage of new users who return the day after installing.

- Benchmark: 25-40% (varies by category)

- What it tells you: Is your onboarding effective? Do users understand your value proposition?

- Action threshold: Below 20% = critical onboarding problem

Day 7 Retention: Percentage of new users who return 7 days after installing.

- Benchmark: 10-20%

- What it tells you: Have users formed an initial habit? Does the app deliver recurring value?

- Action threshold: Below 8% = weak habit formation or missing core loop

Day 30 Retention: Percentage of new users who return 30 days after installing.

- Benchmark: 5-12%

- What it tells you: Has the app become part of the user's routine?

- Action threshold: Below 4% = long-term value proposition problem

Rolling Retention vs. Classic Retention: Classic retention measures whether a user was active on exactly Day N. Rolling retention measures whether a user was active on Day N or any day after, so it is always at least as high as classic retention. Rolling retention is usually more meaningful for non-daily-use apps.
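To make the difference between the two definitions concrete, here is a minimal sketch in Python. The data shapes (`installs` as a user-to-install-date mapping, `activity` as a user-to-active-dates mapping) and the function names are assumptions for illustration, not the export format of any particular analytics tool.

```python
from datetime import date

def classic_retention(installs, activity, n):
    """Share of installed users who were active exactly n days after install."""
    retained = sum(
        1
        for user, installed in installs.items()
        if any((d - installed).days == n for d in activity.get(user, ()))
    )
    return retained / len(installs) if installs else 0.0

def rolling_retention(installs, activity, n):
    """Share of installed users who were active on day n or any later day."""
    retained = sum(
        1
        for user, installed in installs.items()
        if any((d - installed).days >= n for d in activity.get(user, ()))
    )
    return retained / len(installs) if installs else 0.0

# Hypothetical cohort: four users who all installed on the same day.
installs = {u: date(2024, 1, 1) for u in ("u1", "u2", "u3", "u4")}
activity = {
    "u1": {date(2024, 1, 2), date(2024, 1, 8)},  # active on day 1 and day 7
    "u2": {date(2024, 1, 2)},                    # active on day 1 only
    "u3": {date(2024, 1, 10)},                   # active on day 9 only
}

print(classic_retention(installs, activity, 1))  # 0.5
print(classic_retention(installs, activity, 7))  # 0.25
print(rolling_retention(installs, activity, 7))  # 0.5
```

User `u3` skipped Day 7 but came back on Day 9, so they count toward rolling Day 7 retention and not toward classic Day 7 retention.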

Active Users

DAU (Daily Active Users): Unique users who open your app at least once on a given day.

WAU (Weekly Active Users): Unique users who open your app at least once in a given 7-day period.

MAU (Monthly Active Users): Unique users who open your app at least once in a given 30-day period (or calendar month).

DAU/MAU Ratio (Stickiness): The most revealing engagement metric. It is average DAU divided by MAU: the share of your monthly users who are active on a typical day.

- Benchmark: 15-25% for most apps, 40%+ for highly engaging apps (social, messaging)

- What it tells you: How "must-have" is your app in users' daily routine?

- Why it matters: High DAU/MAU ratio predicts long-term retention and monetization
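As an illustration, stickiness can be computed from a list of `(user, day)` activity records. This is a sketch under assumptions: the function name, the record format, and the choice to average DAU over the window are all illustrative, and analytics tools may define the window differently.

```python
from datetime import date, timedelta

def stickiness(events, window_start, window_days):
    """Average DAU divided by MAU over a window of window_days days."""
    days = [window_start + timedelta(days=i) for i in range(window_days)]
    daily_users = {d: set() for d in days}
    for user, day in events:
        if day in daily_users:  # ignore activity outside the window
            daily_users[day].add(user)
    mau = len(set().union(*daily_users.values()))
    avg_dau = sum(len(users) for users in daily_users.values()) / window_days
    return avg_dau / mau if mau else 0.0

# Hypothetical 30-day window: "a" opens the app every day, "b" on three days.
start = date(2024, 3, 1)
events = [("a", start + timedelta(days=i)) for i in range(30)]
events += [("b", start + timedelta(days=i)) for i in (0, 10, 20)]

print(round(stickiness(events, start, 30), 2))  # 0.55
```

Here MAU is 2 and average DAU is 1.1, so one heavy user and one occasional user together produce a ratio of 55%.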

Session Metrics

Sessions per user per day: How often users open your app.

- Benchmark: 1.5-3 for productivity apps, 3-8 for social/messaging apps

Average session duration: How long users spend per session.

- Benchmark: 3-10 minutes for utility apps, 10-30+ minutes for content/gaming apps

Session interval: Average time between sessions for the same user. Decreasing intervals indicate increasing engagement.
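A session interval can be derived from session start timestamps alone. The sketch below assumes a hypothetical mapping of user IDs to session start times and averages the gaps between consecutive sessions of the same user.

```python
from datetime import datetime
from statistics import mean

def average_session_interval_hours(session_starts):
    """Mean gap in hours between consecutive sessions of the same user."""
    gaps = []
    for starts in session_starts.values():
        ordered = sorted(starts)
        gaps.extend(
            (later - earlier).total_seconds() / 3600
            for earlier, later in zip(ordered, ordered[1:])
        )
    return mean(gaps) if gaps else None  # None when no user has two sessions

# Hypothetical session start times.
session_starts = {
    "u1": [
        datetime(2024, 3, 1, 8, 0),
        datetime(2024, 3, 1, 12, 0),
        datetime(2024, 3, 1, 20, 0),
    ],
    "u2": [datetime(2024, 3, 1, 9, 0), datetime(2024, 3, 2, 9, 0)],
}

print(average_session_interval_hours(session_starts))  # 12.0
```

Tracking this value per week shows whether intervals are shrinking, which is the engagement signal described above.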

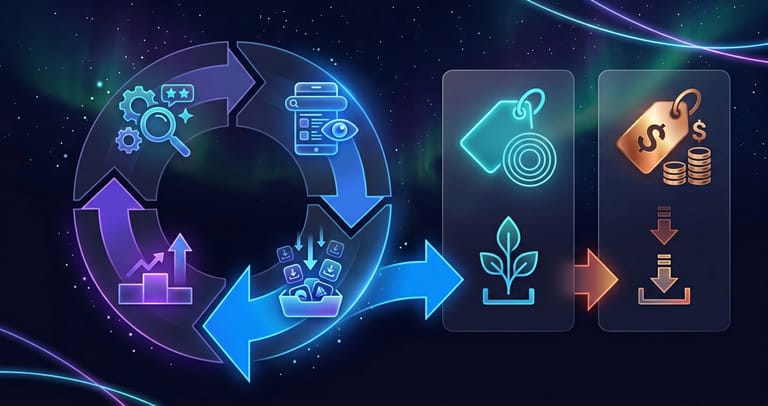

Tier 3: Growth Metrics (Track Monthly)

Acquisition Metrics

New installs: Total new downloads per period.

- Track by source: organic search, organic browse, paid, referral, web

- More useful when segmented by source than as an aggregate

Organic install share: Organic installs ÷ total installs.

- Target: 60-80% for a healthy growth model

- Below 40% = over-dependence on paid acquisition

Cost Per Install (CPI): Paid acquisition spend ÷ installs attributed to paid channels.

- iOS benchmark: $2-5 US

- Android benchmark: $0.50-2 US

- Track per channel and per campaign for optimization
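The two acquisition ratios above reduce to simple arithmetic once installs are broken down by source. In this sketch the source labels and the decision to count only `organic_search` and `organic_browse` as organic are assumptions; adjust them to match how your attribution data is labeled.

```python
# Assumed labels for organic sources; referral and web are counted separately.
ORGANIC_SOURCES = {"organic_search", "organic_browse"}

def organic_share(installs_by_source):
    """Organic installs divided by total installs."""
    total = sum(installs_by_source.values())
    organic = sum(
        count for source, count in installs_by_source.items()
        if source in ORGANIC_SOURCES
    )
    return organic / total if total else 0.0

def cost_per_install(paid_spend, paid_installs):
    """Paid spend divided by installs from paid channels."""
    return paid_spend / paid_installs if paid_installs else None

# Hypothetical monthly numbers.
installs = {
    "organic_search": 4000,
    "organic_browse": 2500,
    "paid": 2000,
    "referral": 1000,
    "web": 500,
}

print(organic_share(installs))                     # 0.65
print(cost_per_install(5000.0, installs["paid"]))  # 2.5
```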

Revenue Metrics

Average Revenue Per User (ARPU): Total revenue in a period ÷ active users in that period.

- Track monthly and segment by user cohort

- ARPU should increase over time as your monetization matures

Average Revenue Per Paying User (ARPPU): Total revenue ÷ paying users only.

- Isolates monetization effectiveness from conversion rate

- Useful for optimizing pricing and premium features
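A quick worked example shows why ARPU and ARPPU are worth tracking separately. The figures below are hypothetical.

```python
def arpu(revenue, active_users):
    """Revenue divided by all active users in the period."""
    return revenue / active_users if active_users else 0.0

def arppu(revenue, paying_users):
    """Revenue divided by paying users only."""
    return revenue / paying_users if paying_users else 0.0

# Hypothetical month: 10,000 active users, 400 of them paying.
revenue, active_users, paying_users = 5000.0, 10_000, 400

print(arpu(revenue, active_users))   # 0.5
print(arppu(revenue, paying_users))  # 12.5
print(paying_users / active_users)   # 0.04
```

ARPU equals ARPPU multiplied by the share of users who pay (12.5 × 0.04 = 0.5), so a flat ARPU can hide a rising ARPPU combined with a falling conversion rate.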

Lifetime Value (LTV): Projected total revenue from a user over their entire lifetime.

- Calculate by cohort: LTV = monthly ARPU × average lifetime (in months)

- More sophisticated models use retention curves and monetization rate curves

- The most important metric for determining sustainable paid acquisition budgets
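The simple formula and a retention-curve variant can be sketched as follows. The curve-based version is one common simplification that assumes a constant monthly ARPU for retained users; the function names and numbers are illustrative only.

```python
def simple_ltv(monthly_arpu, average_lifetime_months):
    """LTV = monthly ARPU x average lifetime in months."""
    return monthly_arpu * average_lifetime_months

def curve_ltv(monthly_arpu, monthly_retention):
    """Sum monthly ARPU over the share of the cohort still active each month.

    monthly_retention[0] is the install month (1.0), monthly_retention[1]
    is the share still active one month later, and so on.
    """
    return monthly_arpu * sum(monthly_retention)

print(simple_ltv(2.0, 6))                        # 12.0
print(curve_ltv(2.0, [1.0, 0.5, 0.25, 0.25]))    # 4.0
```

The curve-based estimate only covers the months you have observed, so it is a lower bound until the cohort has fully churned or the curve is extrapolated.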

Monthly Recurring Revenue (MRR): For subscription apps, total active subscription revenue per month.

- Track new MRR, expansion MRR, churned MRR, and net new MRR (new + expansion - churned)

- The growth rate of MRR, driven by net new MRR, is the clearest indicator of business health
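As a worked example of how the MRR components combine, the sketch below treats net MRR movement as new plus expansion minus churned MRR. The function names and figures are hypothetical, and contraction from downgrades is left out for brevity.

```python
def net_new_mrr(new, expansion, churned):
    """Net monthly change in MRR from the three tracked components."""
    return new + expansion - churned

def mrr_growth_rate(starting_mrr, new, expansion, churned):
    """Net change in MRR as a fraction of MRR at the start of the month."""
    if not starting_mrr:
        return None
    return net_new_mrr(new, expansion, churned) / starting_mrr

# Hypothetical month starting at $20,000 MRR.
print(net_new_mrr(3000, 500, 1500))             # 2000
print(mrr_growth_rate(20000, 3000, 500, 1500))  # 0.1
```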

Conversion Metrics

Trial-to-Paid Conversion: Percentage of free trial users who become paying subscribers.

- Benchmark: 5-15% for most subscription apps

- Below 5% = pricing problem, paywall design issue, or insufficient value demonstration during trial

Free-to-Paid Conversion (Freemium): Percentage of free users who purchase premium.

- Benchmark: 2-5% for most freemium apps

- Optimize by identifying the "aha moment" that drives upgrades

Tier 4: Diagnostic Metrics (Track When Investigating Issues)

These metrics provide context when Tier 1-3 metrics change unexpectedly:

Technical Metrics

Crash Rate: Percentage of sessions that end in a crash.

- Target: Below 1% (below 0.5% is excellent)

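- High crash rates can hurt store visibility: Google Play treats crash rate as an Android vitals quality signal, and stability is generally understood to matter on the App Store as well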

App Load Time: Time from tap to usable state.

- Target: Under 3 seconds for cold start

- As a rough rule of thumb, each additional second can cost 2-5% of Day 1 retention

ANR Rate (Android): Application Not Responding events.

- Target: Below 0.5%

- Directly impacts Google Play ranking

Battery Usage: Your app's energy impact relative to category norms.

- Excessive battery drain leads to uninstalls and negative reviews
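The numeric targets in this section (crash rate, cold start time, ANR rate) lend themselves to an automated check. The sketch below is one possible shape for it; the metric keys and the `failing_metrics` helper are hypothetical names, and the limits are the targets stated above.

```python
# Upper limits taken from the targets above; a value at or over the limit fails.
TARGETS = {
    "crash_rate": 0.01,         # below 1% of sessions
    "cold_start_seconds": 3.0,  # under 3 seconds
    "anr_rate": 0.005,          # below 0.5%
}

def crash_rate(crashed_sessions, total_sessions):
    """Share of sessions that ended in a crash."""
    return crashed_sessions / total_sessions if total_sessions else 0.0

def failing_metrics(observed):
    """Names of metrics that meet or exceed their limit, sorted alphabetically."""
    return sorted(
        name for name, limit in TARGETS.items()
        if name in observed and observed[name] >= limit
    )

# Hypothetical readings for one release.
observed = {
    "crash_rate": crash_rate(40, 10_000),  # 0.004
    "cold_start_seconds": 3.6,
    "anr_rate": 0.007,
}

print(failing_metrics(observed))  # ['anr_rate', 'cold_start_seconds']
```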

User Experience Metrics

Onboarding Completion Rate: Percentage of new users who complete your onboarding flow.

- Target: 70%+ completion

- As a rough rule of thumb, each unnecessary step can reduce completion by 10-20%

Feature Adoption Rate: Percentage of users who try each key feature.

- Reveals which features users discover vs. which they miss

- Low adoption of a strong feature = discoverability problem

Screen Flow Drop-offs: Where users abandon key flows (purchase, signup, content creation).

- Identify and fix the highest-drop-off points for maximum impact
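Finding the highest-drop-off point is a matter of comparing how many users reach each consecutive step. The sketch below assumes you already have an ordered list of `(step_name, users_reaching_step)` pairs; the step names and counts are hypothetical.

```python
def funnel_dropoffs(step_counts):
    """Return (transition, drop-off share) for each consecutive pair of steps."""
    results = []
    for (name_a, count_a), (name_b, count_b) in zip(step_counts, step_counts[1:]):
        drop = 1 - count_b / count_a if count_a else 0.0
        results.append((f"{name_a} -> {name_b}", round(drop, 4)))
    return results

# Hypothetical signup funnel.
funnel = [
    ("onboarding_started", 1000),
    ("onboarding_completed", 700),
    ("signup_completed", 350),
]

dropoffs = funnel_dropoffs(funnel)
worst = max(dropoffs, key=lambda item: item[1])

print(dropoffs)
# [('onboarding_started -> onboarding_completed', 0.3),
#  ('onboarding_completed -> signup_completed', 0.5)]
print(worst[0])  # onboarding_completed -> signup_completed
```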

Setting Up Your Analytics Stack

Essential Tools

For most apps, you need three layers:

Layer 1: Store Analytics (Free)

- App Store Connect Analytics

- Google Play Console Statistics

- Provides: impressions, installs, conversion rates, source attribution, retention overview

Layer 2: Product Analytics

- Firebase Analytics (free, good for basics)

- Amplitude or Mixpanel (more powerful, paid for scale)

- Provides: event tracking, user journeys, funnels, retention cohorts, user segmentation

Layer 3: Revenue Analytics

- RevenueCat (for subscription apps)

- Your own backend reporting

- Provides: MRR, churn, trial conversion, LTV by cohort

What to Track (Event Taxonomy)

Define a clear event taxonomy before implementing. Core events for most apps:

Lifecycle events:

- app_open: app launched

- onboarding_started: began onboarding

- onboarding_completed: finished onboarding

- signup_completed: created account

- first_key_action: completed core action for the first time

Engagement events:

- feature_used (with feature name property)

- content_viewed (with content type/ID)

- session_ended (with duration)

- search_performed (with query)

Monetization events:

- paywall_viewed

- trial_started

- purchase_completed (with product, price, currency)

- subscription_renewed

- subscription_cancelled

Keep it lean. Track 20-40 core events, not 200. Every event you track has a maintenance cost. Focus on events that answer specific business questions.
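One way to keep a taxonomy lean is to define it once in code and validate events against it before they are sent. The sketch below covers a subset of the events listed above. The property names (`feature_name`, `content_type`, `content_id`, `duration_seconds`) and the `validate_event` helper are assumptions for illustration and do not belong to any analytics SDK.

```python
# Event name -> required properties. Property names are illustrative assumptions.
TAXONOMY = {
    "app_open": set(),
    "onboarding_started": set(),
    "onboarding_completed": set(),
    "signup_completed": set(),
    "first_key_action": set(),
    "feature_used": {"feature_name"},
    "content_viewed": {"content_type", "content_id"},
    "session_ended": {"duration_seconds"},
    "search_performed": {"query"},
    "paywall_viewed": set(),
    "trial_started": set(),
    "purchase_completed": {"product", "price", "currency"},
}

def validate_event(name, properties):
    """Return a list of problems; an empty list means the event is valid."""
    if name not in TAXONOMY:
        return [f"unknown event: {name}"]
    missing = TAXONOMY[name] - set(properties)
    return [f"missing property: {prop}" for prop in sorted(missing)]

print(validate_event("app_open", {}))
# []
print(validate_event("purchase_completed", {"product": "pro_monthly", "price": 9.99}))
# ['missing property: currency']
print(validate_event("app_opened", {}))
# ['unknown event: app_opened']
```

Rejecting unknown event names at this layer is what stops a 30-event taxonomy from quietly growing to 200.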

Building an Analytics Dashboard

Weekly Dashboard Template

| Section | Metrics | Time Comparison |
|---|---|---|
| North Star | Your primary metric | vs. last week, vs. 4 weeks ago |
| Acquisition | New installs, organic %, CPI | vs. last week |
| Engagement | DAU, DAU/MAU ratio, sessions/user | vs. last week |
| Retention | D1, D7, D30 for latest cohort | vs. previous cohort |
| Revenue | MRR, ARPU, trial conversion | vs. last month |
| Health | Crash rate, app rating, new reviews | vs. last week |
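
The time comparisons in the template are plain percentage changes. As a sketch, the North Star row could be computed from a list of weekly totals ordered oldest to newest; the function names and values below are hypothetical.

```python
def pct_change(current, previous):
    """Relative change from previous to current, or None if previous is zero."""
    return (current - previous) / previous if previous else None

def north_star_row(weekly_values):
    """Build the North Star row from weekly totals, oldest first (needs 5+ weeks)."""
    if len(weekly_values) < 5:
        raise ValueError("need at least 5 weekly values")
    current = weekly_values[-1]
    return {
        "current": current,
        "vs_last_week": pct_change(current, weekly_values[-2]),
        "vs_4_weeks_ago": pct_change(current, weekly_values[-5]),
    }

# Hypothetical weekly totals for the North Star metric.
row = north_star_row([800, 900, 950, 1000, 1100])
print(row)
# {'current': 1100, 'vs_last_week': 0.1, 'vs_4_weeks_ago': 0.375}
```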

Monthly Deep Dive

Add to the weekly dashboard:

- Cohort analysis: compare retention curves of this month's cohort vs. previous 3 months

- LTV projections: updated LTV estimates by acquisition source

- Feature impact: which features correlate most with retention?

- Funnel analysis: identify and quantify conversion bottlenecks
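For the cohort comparison, users can be grouped by install month and scored on the same Day N. The following is a minimal sketch that assumes each user is represented as a hypothetical `(install_date, active_dates)` pair and uses the classic (exact day) definition of retention.

```python
from collections import defaultdict
from datetime import date, timedelta

def retention_by_cohort(users, n):
    """Classic day-n retention per install month, keyed as 'YYYY-MM'."""
    totals = defaultdict(int)
    retained = defaultdict(int)
    for installed, active_days in users:
        cohort = installed.strftime("%Y-%m")
        totals[cohort] += 1
        if any((day - installed).days == n for day in active_days):
            retained[cohort] += 1
    return {cohort: retained[cohort] / totals[cohort] for cohort in sorted(totals)}

# Hypothetical users: four January installs, two February installs.
jan, feb = date(2024, 1, 5), date(2024, 2, 10)
users = [
    (jan, {jan + timedelta(days=30)}),
    (jan, {jan + timedelta(days=1)}),
    (jan, set()),
    (jan, set()),
    (feb, {feb + timedelta(days=30)}),
    (feb, {feb + timedelta(days=3)}),
]

print(retention_by_cohort(users, 30))  # {'2024-01': 0.25, '2024-02': 0.5}
```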

Common Analytics Mistakes

Tracking downloads instead of active users. Downloads are a vanity metric. An app with 1 million downloads and 10,000 MAU has a 1% active rate — that's a retention crisis, not a success story.

Not segmenting data. Aggregate metrics hide crucial differences. Retention for organic users vs. paid users, or US users vs. international users, can vary dramatically. Always segment.

Analyzing too short a time window. Weekly fluctuations are often noise. Look at 4-week trends minimum before concluding something has changed.
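One simple way to apply the 4-week rule is to compare the average of the latest four weeks against the four weeks before them, rather than reacting to a single week. This is a sketch with hypothetical numbers, not a statistical significance test.

```python
from statistics import mean

def four_week_shift(weekly_values):
    """Relative change between the mean of the latest 4 weeks and the prior 4."""
    if len(weekly_values) < 8:
        raise ValueError("need at least 8 weekly values")
    recent = mean(weekly_values[-4:])
    baseline = mean(weekly_values[-8:-4])
    return (recent - baseline) / baseline

# Hypothetical weekly values, oldest first: individual weeks wobble,
# but the 4-week average moved from 100 to 110.
weekly = [100, 104, 96, 100, 110, 108, 112, 110]
print(four_week_shift(weekly))  # 0.1
```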

Measuring too many things. If your dashboard has 50 metrics, nobody is actually looking at it. Ruthlessly prioritize the 8-12 metrics that drive decisions.

Not connecting metrics to actions. Every metric on your dashboard should answer the question: "If this number goes up or down by 20%, what would we do differently?" If the answer is "nothing," remove it.

Ignoring cohort analysis. Aggregate retention rates are misleading because they blend old and new users. Always analyze retention by acquisition cohort to understand true trends.

Over-optimizing for engagement at the expense of user value. Push notifications can temporarily boost DAU but damage long-term retention if they're annoying. Optimize for sustainable engagement, not metric manipulation.

Connecting Analytics to ASO

Analytics data should directly inform your ASO strategy:

Retention by acquisition source → tells you which organic keywords bring quality users

Feature adoption data → reveals which features to highlight in screenshots and descriptions

Session patterns → inform when to trigger rating prompts (after positive engagement moments)

Conversion funnel data → identifies whether your store listing sets correct expectations (if organic users have poor retention, the listing may be misleading)

Geographic engagement → reveals which markets have the most engaged users, guiding ASO localization priorities

Conclusion

Mobile app analytics isn't about collecting data — it's about collecting the right data and using it to make better decisions. Start with your North Star metric and the core health metrics (retention, active users, sessions). Build a weekly dashboard that fits on one screen. Segment everything. And always connect metrics to actions.

The apps that win aren't necessarily the ones with the most sophisticated analytics stacks — they're the ones that consistently use a focused set of metrics to identify problems, test solutions, and measure impact. Simple analytics used well beats complex analytics ignored.